Quickstart

In this guide we'll go over the basic ways to create a Q&A chain and agent over a SQL database. These systems will allow us to ask a question about the data in a SQL database and get back a natural language answer. The main difference between the two is that our agent can query the database in a loop as many time as it needs to answer the question.

⚠️ Security note ⚠️

Building Q&A systems of SQL databases can require executing model-generated SQL queries. There are inherent risks in doing this. Make sure that your database connection permissions are always scoped as narrowly as possible for your chain/agent's needs. This will mitigate though not eliminate the risks of building a model-driven system. For more on general security best practices, see here.

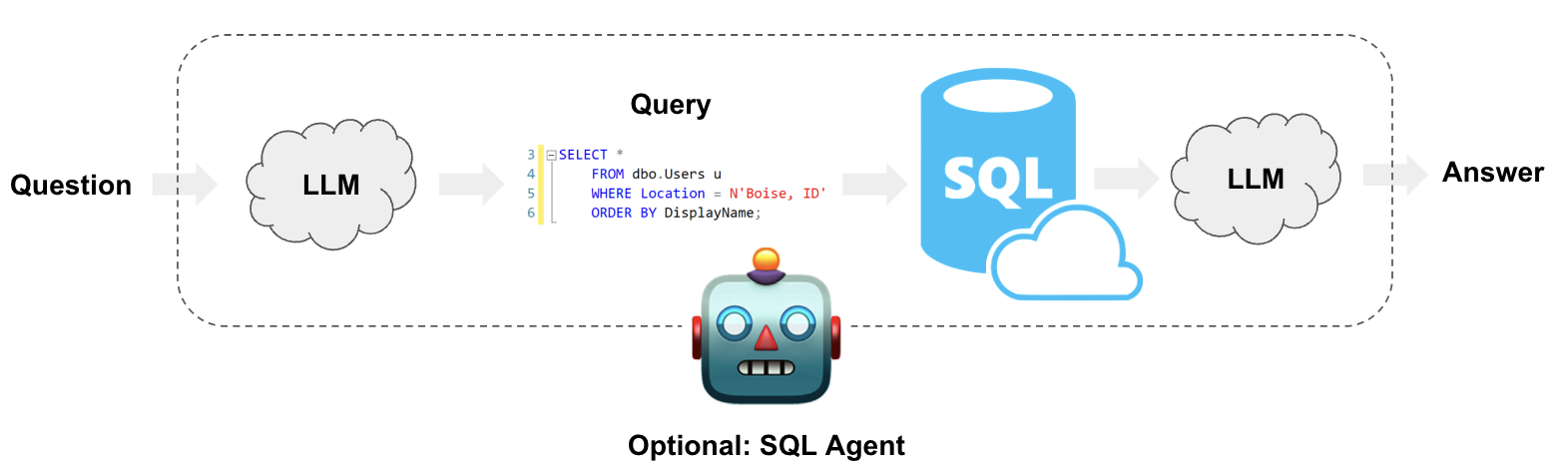

Architecture

At a high-level, the steps of most SQL chain and agent are:

- Convert question to SQL query: Model converts user input to a SQL query.

- Execute SQL query: Execute the SQL query

- Answer the question: Model responds to user input using the query results.

Setup

First, get required packages and set environment variables:

- npm

- Yarn

- pnpm

npm i langchain @langchain/community @langchain/openai

yarn add langchain @langchain/community @langchain/openai

pnpm add langchain @langchain/community @langchain/openai

We default to OpenAI models in this guide.

export OPENAI_API_KEY=<your key>

# Uncomment the below to use LangSmith. Not required, but recommended for debugging and observability.

# export LANGCHAIN_API_KEY=<your key>

# export LANGCHAIN_TRACING_V2=true

import { SqlDatabase } from "langchain/sql_db";

import { DataSource } from "typeorm";

const datasource = new DataSource({

type: "sqlite",

database: "../../../../Chinook.db",

});

const db = await SqlDatabase.fromDataSourceParams({

appDataSource: datasource,

});

console.log(db.allTables.map((t) => t.tableName));

/**

[

'Album', 'Artist',

'Customer', 'Employee',

'Genre', 'Invoice',

'InvoiceLine', 'MediaType',

'Playlist', 'PlaylistTrack',

'Track'

]

*/

API Reference:

- SqlDatabase from

langchain/sql_db

Great! We've got a SQL database that we can query. Now let's try hooking it up to an LLM.

Chain

Let's create a simple chain that takes a question, turns it into a SQL query, executes the query, and uses the result to answer the original question.

Convert question to SQL query

The first step in a SQL chain or agent is to take the user input and convert it to a SQL query. LangChain comes with a built-in chain for this: createSqlQueryChain

import { ChatOpenAI } from "@langchain/openai";

import { createSqlQueryChain } from "langchain/chains/sql_db";

import { SqlDatabase } from "langchain/sql_db";

import { DataSource } from "typeorm";

const datasource = new DataSource({

type: "sqlite",

database: "../../../../Chinook.db",

});

const db = await SqlDatabase.fromDataSourceParams({

appDataSource: datasource,

});

const llm = new ChatOpenAI({ model: "gpt-4", temperature: 0 });

const chain = await createSqlQueryChain({

llm,

db,

dialect: "sqlite",

});

const response = await chain.invoke({

question: "How many employees are there?",

});

console.log("response", response);

/**

response SELECT COUNT(*) FROM "Employee"

*/

console.log("db run result", await db.run(response));

/**

db run result [{"COUNT(*)":8}]

*/

API Reference:

- ChatOpenAI from

@langchain/openai - createSqlQueryChain from

langchain/chains/sql_db - SqlDatabase from

langchain/sql_db

We can look at the LangSmith trace to get a better understanding of what this chain is doing. We can also inspect the chain directly for its prompts. Looking at the prompt (below), we can see that it is:

- Dialect-specific. In this case it references SQLite explicitly.

- Has definitions for all the available tables.

- Has three examples rows for each table.

This technique is inspired by papers like this, which suggest showing examples rows and being explicit about tables improves performance. We can also inspect the full prompt via the LangSmith trace:

Execute SQL query

Now that we've generated a SQL query, we'll want to execute it. This is the most dangerous part of creating a SQL chain. Consider carefully if it is OK to run automated queries over your data. Minimize the database connection permissions as much as possible. Consider adding a human approval step to you chains before query execution (see below).

We can use the QuerySqlTool to easily add query execution to our chain:

import { ChatOpenAI } from "@langchain/openai";

import { createSqlQueryChain } from "langchain/chains/sql_db";

import { SqlDatabase } from "langchain/sql_db";

import { DataSource } from "typeorm";

import { QuerySqlTool } from "langchain/tools/sql";

const datasource = new DataSource({

type: "sqlite",

database: "../../../../Chinook.db",

});

const db = await SqlDatabase.fromDataSourceParams({

appDataSource: datasource,

});

const llm = new ChatOpenAI({ model: "gpt-4", temperature: 0 });

const executeQuery = new QuerySqlTool(db);

const writeQuery = await createSqlQueryChain({

llm,

db,

dialect: "sqlite",

});

const chain = writeQuery.pipe(executeQuery);

console.log(await chain.invoke({ question: "How many employees are there" }));

/**

[{"COUNT(*)":8}]

*/

API Reference:

- ChatOpenAI from

@langchain/openai - createSqlQueryChain from

langchain/chains/sql_db - SqlDatabase from

langchain/sql_db - QuerySqlTool from

langchain/tools/sql

See a LangSmith trace of the chain above here.

Answer the question

Now that we have a way to automatically generate and execute queries, we just need to combine the original question and SQL query result to generate a final answer. We can do this by passing question and result to the LLM once more:

import { ChatOpenAI } from "@langchain/openai";

import { createSqlQueryChain } from "langchain/chains/sql_db";

import { SqlDatabase } from "langchain/sql_db";

import { DataSource } from "typeorm";

import { QuerySqlTool } from "langchain/tools/sql";

import { PromptTemplate } from "@langchain/core/prompts";

import { StringOutputParser } from "@langchain/core/output_parsers";

import {

RunnablePassthrough,

RunnableSequence,

} from "@langchain/core/runnables";

const datasource = new DataSource({

type: "sqlite",

database: "../../../../Chinook.db",

});

const db = await SqlDatabase.fromDataSourceParams({

appDataSource: datasource,

});

const llm = new ChatOpenAI({ model: "gpt-4", temperature: 0 });

const executeQuery = new QuerySqlTool(db);

const writeQuery = await createSqlQueryChain({

llm,

db,

dialect: "sqlite",

});

const answerPrompt =

PromptTemplate.fromTemplate(`Given the following user question, corresponding SQL query, and SQL result, answer the user question.

Question: {question}

SQL Query: {query}

SQL Result: {result}

Answer: `);

const answerChain = answerPrompt.pipe(llm).pipe(new StringOutputParser());

const chain = RunnableSequence.from([

RunnablePassthrough.assign({ query: writeQuery }).assign({

result: (i: { query: string }) => executeQuery.invoke(i.query),

}),

answerChain,

]);

console.log(await chain.invoke({ question: "How many employees are there" }));

/**

There are 8 employees.

*/

API Reference:

- ChatOpenAI from

@langchain/openai - createSqlQueryChain from

langchain/chains/sql_db - SqlDatabase from

langchain/sql_db - QuerySqlTool from

langchain/tools/sql - PromptTemplate from

@langchain/core/prompts - StringOutputParser from

@langchain/core/output_parsers - RunnablePassthrough from

@langchain/core/runnables - RunnableSequence from

@langchain/core/runnables

See a LangSmith trace of the chain above here.

Next steps

For more complex query-generation, we may want to create few-shot prompts or add query-checking steps. For advanced techniques like this and more check out:

- Prompting strategies: Advanced prompt engineering techniques.

- Query checking: Add query validation and error handling.

- Large databases: Techniques for working with large databases.

Agents

LangChain offers a number of tools and functions that allow you to create SQL Agents which can provide a more flexible way of interacting with SQL databases. The main advantages of using SQL Agents are:

- It can answer questions based on the databases' schema as well as on the databases' content (like describing a specific table).

- It can recover from errors by running a generated query, catching the traceback and regenerating it correctly.

- It can answer questions that require multiple dependent queries.

- It will save tokens by only considering the schema from relevant tables.

- To initialize the agent, we use

createOpenAIToolsAgentfunction. This agent contains theSqlToolkitwhich contains tools to: - Create and execute queries

- Check query syntax

- Retrieve table descriptions

- … and more